Introduction

You chose Flutter because the promise was compelling: one codebase, two platforms, faster feature delivery. Your developers are happy. Your product velocity is up.

Then you ask your QA team to write automated tests.

Appium does not work the way it does for native apps. XCUITest cannot see your widgets. Your Flutter integration tests run in a Dart environment that does not reflect real production conditions. The device lab you built for native testing is partially irrelevant. And every standard reference you find online for mobile test automation was written for native apps.

Flutter's cross-platform promise does not extend to testing. The same architectural decision that lets you share business logic across Android and iOS (the Skia and Impeller rendering engines drawing directly to a canvas) is precisely what breaks the standard automation stack.

This guide is written for cross-platform QA teams. It covers what makes Flutter testing different, what a complete Flutter QA strategy looks like across all three testing layers, and how QApilot tests Flutter apps post-build from the compiled binary, the same way it handles native apps, without requiring source instrumentation, Dart-level hooks, or changes to your development workflow.

Why Flutter App Testing Is Fundamentally Different

The Custom Canvas Rendering Problem

Every standard mobile automation tool (Appium, XCUITest, UIAutomator, and Espresso at the UI layer) works by traversing the platform's native accessibility tree. On Android, this is the View hierarchy exposed through UIAutomator. On iOS, it is the XCTest accessibility hierarchy. These trees describe every UI element: what it is, where it is, what it is called, how to interact with it.

Flutter does not use native widgets. It uses the Skia or Impeller rendering engine to draw every pixel of your UI directly onto a canvas. From the platform's perspective, your entire app is a single opaque drawing surface. The native accessibility tree sees one element: the Flutter view. There is no button, no input field, no list item, just a canvas.

This means every tool that relies on the native accessibility tree, which is most of them, cannot see inside a Flutter app without additional instrumentation. The tools are not broken. They are seeing exactly what the platform exposes, and the platform exposes nothing useful.

What This Means for Your Test Stack

The practical implication is that you cannot drop Flutter into your existing native test infrastructure and expect it to work. Three common failure modes appear when teams try:

- Appium element identification fails: Appium's UIAutomator driver (Android) and XCTest driver (iOS) cannot identify Flutter widgets by standard means. Element locators that work perfectly on a native app return empty results on Flutter.

- Test stability degrades severely: Workarounds such as coordinate-based tapping instead of element identification produce tests that break on any screen size variation, dynamic content, or layout change. Maintenance cost is extreme.

- Flutter-specific Appium plugins underperform: Community Flutter plugins for Appium exist but require build-time instrumentation, have incomplete widget coverage, and are significantly less stable than native Appium tests. They solve the access problem partially, not completely.
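
To make the fragility of coordinate-based tapping concrete, here is a minimal Python sketch. The layout rule in `button_rect` and both screen sizes are hypothetical, chosen only for illustration, and no real automation API is involved. It records a tap at the centre of a button on one screen and replays the same pixel coordinates on a taller screen.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in device pixels."""
    left: float
    top: float
    width: float
    height: float

    def contains(self, x: float, y: float) -> bool:
        return (self.left <= x <= self.left + self.width
                and self.top <= y <= self.top + self.height)


def button_rect(screen_w: int, screen_h: int) -> Rect:
    """Hypothetical layout: a 48 px tall button spanning 80% of the
    screen width, centred horizontally, pinned 24 px above the bottom."""
    width = 0.8 * screen_w
    return Rect(left=(screen_w - width) / 2,
                top=screen_h - 24 - 48,
                width=width,
                height=48)


# Record the tap on a 1080x1920 screen: the centre of the button.
recorded = button_rect(1080, 1920)
tap_x = recorded.left + recorded.width / 2
tap_y = recorded.top + recorded.height / 2

# Replay the same absolute coordinates on two devices.
hits_on_recording_device = button_rect(1080, 1920).contains(tap_x, tap_y)
hits_on_taller_device = button_rect(1080, 2400).contains(tap_x, tap_y)

print(hits_on_recording_device, hits_on_taller_device)
```

On the taller screen the button moves down with the bottom edge, so the recorded tap lands on empty canvas. This is the same class of failure a coordinate-based suite hits whenever layout or screen size changes.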

The Cross-Platform Promise Does Not Include Testing

Flutter teams often assume that because they have one codebase, they have one test suite. This is incorrect. Even in a Flutter app with identical business logic on Android and iOS, platform-specific behaviours require separate validation on each platform:

| Behaviour Area | Android | iOS | Test Impact |
|---|---|---|---|
| Navigation model | Back button / gesture navigation | Swipe-back gesture / navigation bar | Separate test paths per platform |
| Permission dialogs | Android permission request API | iOS privacy permission sheets | Platform-specific dialog handling required |
| Keyboard behaviour | System keyboard with back key | iOS keyboard with done/return logic | Form flow tests differ by platform |
| Notification handling | Notification drawer, background intent | Banner, lock screen, notification centre | Platform-specific push test cases |
| Deep linking | Android App Links / intent filters | Universal Links / custom URL schemes | Link handling tested separately |
| Background processing | WorkManager, Foreground Services | Background App Refresh, BGTaskScheduler | Background state tests platform-specific |
| File system access | Scoped storage (Android 10+) | Files app integration, sandboxing | File operation tests differ |

The Three Layers of Flutter App Testing

Layer 1: Widget Testing

Widget testing is Flutter's native unit-test-adjacent layer. Tests run in a simulated Flutter environment, rendering individual widgets or small widget trees in isolation, without a device or emulator. Widget tests are fast (hundreds can run in seconds) and are ideal for validating component logic, rendering behaviour, and state management.

What widget tests can validate: correct rendering of widgets given specific state, widget interactions (tap, scroll, input), state changes in response to user actions, and widget composition in small trees. What widget tests cannot validate: device-specific rendering differences, system integration (permissions, notifications, file access), network behaviour, and performance under real hardware constraints.

Widget tests are the fastest and least expensive layer of the Flutter testing pyramid. They should cover the majority of individual component behaviour, but they cannot substitute for higher-level testing against real devices.

Layer 2: Integration Testing

Flutter integration tests run on a real device or emulator, exercising the full app from within the Dart environment. They can test multi-screen flows, real navigation, and integration with some system features. Integration tests are more realistic than widget tests and significantly slower.

The critical limitation of Flutter integration tests is that they run inside the Flutter engine, so they do not exercise the app the way a user would. They cannot fully simulate system-level interruptions (incoming calls, low memory warnings, background suspension), and they require access to the Dart source code or instrumentation hooks, which couples them tightly to the development workflow.

Integration tests are valuable for validating core multi-screen flows in a development environment. They are not a substitute for end-to-end testing on real devices from the outside.

Layer 3: End-to-End Testing Against Real Devices

End-to-end Flutter testing against real devices (from the outside, without source access) is the hardest layer and the most valuable for production quality assurance. It is also the layer that breaks standard tooling.

This is the problem QApilot solves directly. By testing from the compiled binary rather than from the source, QApilot validates your Flutter app the way your users use it (on real hardware, with real OS behaviour, across real device configurations) without requiring any changes to your development or build workflow.

Understanding the Flutter Testing Challenge in Detail

The Accessibility Tree Gap

Flutter introduced semantics, an accessibility layer that can expose widget information to platform accessibility services when enabled. However, semantics in Flutter have important limitations for automated testing: they must be explicitly enabled and maintained by developers (many teams do not), they only expose a subset of widget properties, and the semantics tree does not map one-to-one to the widget tree in complex UIs.

Tools that rely on Flutter semantics for element identification depend on how well the development team has implemented accessibility throughout the app. In practice, this varies enormously, which means the accessibility-based approach to Flutter test automation couples test reliability to the quality of the app's accessibility implementation.

Platform Version and Device Variation Still Applies

A common misconception about Flutter is that because the rendering is consistent across the canvas, device and OS variation is less important than in native apps. This is partially true for visual rendering but entirely false for system integration.

Flutter apps still run on real OS versions with real permission models, real background process management, real keyboard behaviour, and real thermal and memory constraints. An Android 14 tightening of background process launch restrictions affects your Flutter app exactly as it affects a native app. An iOS update changing permission dialog behaviour affects your Flutter app's auth flow. A budget Android device with 2GB RAM imposes the same memory constraints on a Flutter app as on a native one.

Device health monitoring and cross-device compatibility testing are as important for Flutter as for any other app stack. The canvas rendering engine does not insulate your app from hardware reality.

Using QApilot for Flutter App Testing

Step 1: Upload Your Flutter Build (No Instrumentation Required)

Upload your compiled Flutter binary to QApilot. For Android, upload the APK or AAB built with your standard release configuration. For iOS, upload the IPA. No Dart-level test hooks, no flutter_driver setup, no instrumentation packages, and no source access are required. QApilot tests your app as a compiled binary, the same build your users will receive.

This is a meaningful architectural distinction from tools that require source instrumentation. Instrumented builds can behave differently from production builds, so the test environment is not the production environment. QApilot tests the production-equivalent binary, closing the gap between your test environment and what users actually receive.

Step 2: Automatic Knowledge Graph Construction for Flutter

When a Flutter build is uploaded, QApilot's autonomous crawler explores the app and builds the Knowledge Graph, the same semantic model used for native apps. The crawler interacts with the Flutter canvas using a combination of rendered output analysis, accessibility semantics where present, and interaction-outcome mapping to understand what each element does and how it fits into the app's flow.

The result is a semantic model of your Flutter app that does not depend on specific implementation details of the widget tree. Tests built on the Knowledge Graph reference what elements do, not how they are implemented, which makes them resilient to widget refactoring and UI changes.

Step 3: Platform-Specific Test Runs, Android and iOS Separately

Configure QApilot to run both your Android and iOS Flutter builds through the same test suite. Because the Knowledge Graph captures semantic behaviour rather than platform-specific implementation, the same test intent applies to both platforms. QApilot nevertheless executes against each platform separately, exposing platform-specific failures that a single-platform test run would miss.

The platform-specific behaviour matrix (navigation, permissions, keyboard, notifications, deep linking) is validated on each platform, with failures surfaced per-platform so your team can identify which issues are genuinely cross-platform and which are iOS-specific or Android-specific.
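
The per-platform attribution described above comes down to comparing two sets of failing tests. The sketch below illustrates that idea in plain Python. The test names and the `attribute_failures` helper are hypothetical and do not represent QApilot's report format or API.

```python
def attribute_failures(android_failures, ios_failures):
    """Split failing test names into cross-platform and single-platform groups."""
    android = set(android_failures)
    ios = set(ios_failures)
    return {
        "cross_platform": sorted(android & ios),  # failed on both platforms
        "android_only": sorted(android - ios),    # failed only on Android
        "ios_only": sorted(ios - android),        # failed only on iOS
    }


# Hypothetical results from running the same test intent on each platform.
report = attribute_failures(
    android_failures=["login", "deep_link_open"],
    ios_failures=["login", "transfer_keyboard"],
)
print(report)
```

A failure that appears in both lists usually points at shared Dart logic, while a single-platform failure points at the platform integration areas listed in the behaviour matrix.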

Step 4: Device Health Monitoring for Flutter Apps

Flutter's rendering engine adds CPU and memory overhead above the native baseline. The Skia and Impeller engines require processing resources to manage the canvas. Animation-heavy Flutter UIs can be particularly demanding on budget devices. QApilot's device health monitoring captures CPU, memory, storage, battery drain, and thermal throttling during Flutter test execution: the same metrics tracked for native apps, applied to the Flutter execution context.

Key Flutter-specific health signals to monitor: CPU load during animation sequences (Flutter's declarative animation model can be CPU-intensive on budget devices), memory growth across repeated screen navigations (widget lifecycle management can produce leaks if dispose methods are not implemented correctly), and thermal load during video or image-heavy Flutter screens.
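
One way to turn "memory growth across repeated screen navigations" into a pass/fail signal is to fit a straight line through memory samples taken after each visit to the same screen and look at the slope. The sketch below assumes you can export one memory reading (in MB) per visit from whatever monitoring you use. The function names and the 1 MB-per-visit threshold are illustrative assumptions, not QApilot settings.

```python
def growth_per_visit_mb(samples_mb):
    """Least-squares slope of memory (MB) against visit index."""
    n = len(samples_mb)
    if n < 2:
        raise ValueError("need at least two samples to estimate growth")
    mean_x = (n - 1) / 2
    mean_y = sum(samples_mb) / n
    covariance = sum((x - mean_x) * (y - mean_y)
                     for x, y in enumerate(samples_mb))
    variance = sum((x - mean_x) ** 2 for x in range(n))
    return covariance / variance


def looks_like_leak(samples_mb, threshold_mb_per_visit=1.0):
    """Flag a steady upward trend; the threshold is an assumed budget."""
    return growth_per_visit_mb(samples_mb) > threshold_mb_per_visit


# Hypothetical readings after each of six visits to the same screen.
leaking = [200 + 18 * visit for visit in range(6)]  # grows 18 MB per visit
stable = [200, 201, 200, 199, 200, 201]             # noise around 200 MB

print(looks_like_leak(leaking), looks_like_leak(stable))
```

Using a fitted slope rather than "last minus first" keeps a single noisy sample from triggering or hiding a leak.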

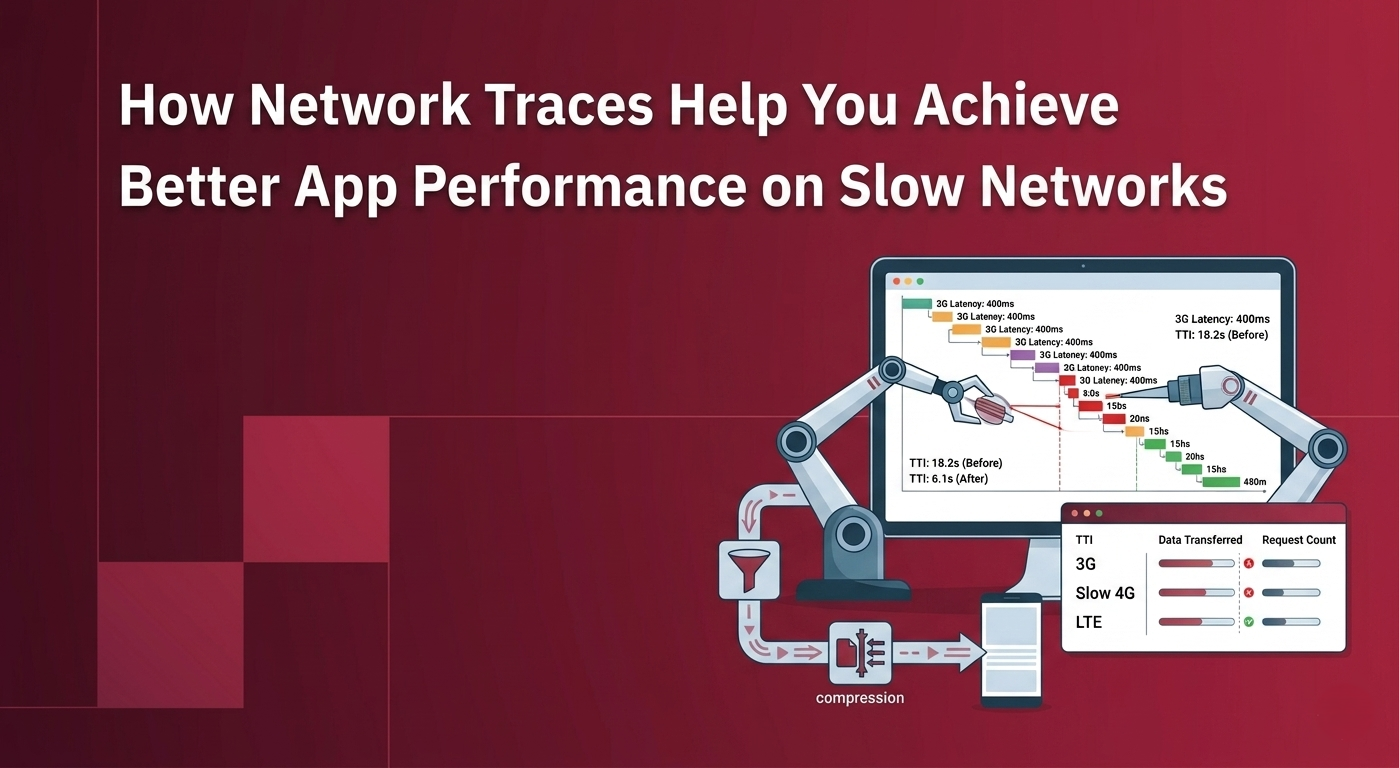

Step 5: Network Traces for Flutter Apps

Flutter apps make the same HTTP and HTTPS requests as native apps, using Dart's http library, dio, or other networking packages. QApilot's network traces feature captures the full request waterfall during Flutter test execution, including request timing, payload sizes, response codes, and sequential dependency chains.

Flutter apps often have an additional network performance consideration: the initial frame rendering delay. Flutter's first frame can be blocked by network requests that fetch configuration, feature flags, or user data before the UI becomes interactive. Network traces make this blocking behaviour visible, and frequently reveal opportunities to defer non-critical requests and reduce time-to-interactive significantly.
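
The gain from parallelising startup requests can be estimated from a trace before any code changes. The sketch below is a simplified model: each request has a duration, some requests depend on others, and requests with no dependency between them are assumed to run fully in parallel. The request names, durations, and dependencies are hypothetical.

```python
def sequential_time(durations):
    """Time to finish when every request waits for the previous one."""
    return sum(durations.values())


def parallel_time(durations, depends_on):
    """Time to finish when only true dependencies are respected.

    This is the longest dependency chain (the critical path). It assumes
    the dependency graph has no cycles.
    """
    finish = {}

    def finish_time(name):
        if name not in finish:
            start = max((finish_time(dep) for dep in depends_on.get(name, ())),
                        default=0.0)
            finish[name] = start + durations[name]
        return finish[name]

    return max(finish_time(name) for name in durations)


# Hypothetical startup requests, durations in seconds on a slow network.
durations = {"config": 2.0, "auth": 2.0, "profile": 1.5,
             "balances": 2.5, "flags": 1.0, "analytics": 1.0}
# auth needs config; profile and balances need auth; the rest are independent.
depends_on = {"auth": ["config"], "profile": ["auth"], "balances": ["auth"]}

print(sequential_time(durations), parallel_time(durations, depends_on))
```

In this model the startup drops from 10.0 to 6.5 seconds without removing a single request. The remaining time is the config, auth, balances chain, which is where further optimisation would have to focus.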

Step 6: CI/CD Integration for Flutter

Integrate QApilot into your Flutter CI/CD pipeline at the build stage. A typical Flutter pipeline runs flutter build apk and flutter build ipa to produce the test artifacts, then routes them to QApilot for Knowledge Graph comparison, self-healing, and test execution. Results return to the pipeline as pass/fail signals, with detailed reports available in QApilot's dashboard.

Recommended Flutter CI/CD gate structure:

| Pipeline Stage | What Runs | Duration | Gate Behaviour |
|---|---|---|---|
| On every commit | Widget tests (flutter test) | 2–5 minutes | Failure blocks merge |
| On every build | Integration tests on emulator + QApilot sanity on device | 15–25 minutes | Failure blocks build advancement |
| On release candidate | Full QApilot regression: Android + iOS, full device matrix | 30–60 minutes | Failure blocks release |
| Scheduled (weekly) | Performance, device health, and expanded device matrix runs | 2–4 hours | Results inform optimisation backlog |
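
The gate behaviour in the table can be expressed as a small decision function that a pipeline script might call after each stage. This is a sketch only: the `Stage` enum, the `gate` function, and the returned strings are hypothetical names, not part of any CI system or of QApilot.

```python
from enum import Enum


class Stage(Enum):
    COMMIT = "commit"
    BUILD = "build"
    RELEASE_CANDIDATE = "release_candidate"
    SCHEDULED = "scheduled"


# What a failure blocks at each stage, following the table above.
# Scheduled runs are absent because their results only inform the backlog.
BLOCKS_ON_FAILURE = {
    Stage.COMMIT: "merge",
    Stage.BUILD: "build advancement",
    Stage.RELEASE_CANDIDATE: "release",
}


def gate(stage, passed):
    """Return the pipeline action for a stage result."""
    if passed:
        return "proceed"
    blocked = BLOCKS_ON_FAILURE.get(stage)
    if blocked is None:
        return "record for optimisation backlog"
    return f"block {blocked}"


print(gate(Stage.RELEASE_CANDIDATE, passed=False))
```

Keeping the scheduled runs non-blocking lets the long performance and device-matrix jobs surface trends without holding up day-to-day merges.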

Step 7: Flutter-Specific Reporting and Regression Tracking

QApilot's reporting surfaces Flutter test results with platform breakdowns: failures are attributed to Android-specific, iOS-specific, or cross-platform causes. Device health metrics are attached to each test run, enabling correlation between Flutter performance degradation and specific devices or OS versions.

Track regression trends across Flutter builds: did the latest package update increase memory consumption? Did the new animation introduce thermal throttling on budget devices? Did the API change add a sequential request to the startup critical path? These questions are answerable from QApilot's trend data without manual investigation.

Flutter Testing Layer Breakdown

| Testing Layer | Runs On | Access Required | Coverage | QApilot Role |
|---|---|---|---|---|
| Widget tests | Simulated Flutter env | Dart source | Component behaviour, state, rendering | Not applicable (runs in development environment) |
| Integration tests | Device or emulator | Dart source + flutter_driver | Multi-screen flows, basic integration | Complementary: QApilot adds post-build validation |
| End-to-end on real device | Physical device | Compiled binary only | Full user journeys, platform integration, real device conditions | Core QApilot capability, no source required |
| Cross-platform validation | Android + iOS real devices | Compiled binary for each platform | Platform-specific behaviour differences | QApilot runs both platforms from same test intent |
| Performance and health | Physical devices (diverse) | Compiled binary | CPU, memory, thermal, battery under real conditions | QApilot device health monitoring, fully automatic |
| Network performance | Physical devices on throttled network | Compiled binary | Request waterfall, payload sizes, slow network behaviour | QApilot network traces, fully automatic |

Real-World Optimisation Case Study

The Scenario

A fintech Flutter app, a personal finance management tool supporting both Android and iOS, was experiencing significant QA friction as the team scaled. Their QA process relied on manual testing supplemented by a small Flutter integration test suite. Each release cycle took fourteen days: three days of integration test runs, five days of manual testing across six devices, three days of bug fixing and retesting, and three days of sign-off processes.

The team was releasing on a four-week cycle but taking fourteen days of QA per release, leaving only two weeks for development. The engineering lead identified QA cycle time as the primary constraint on shipping velocity.

The Investigation

The team adopted QApilot and uploaded both the Android APK and iOS IPA from their release candidate build. Knowledge Graph construction completed in eight minutes for the Android build and eleven minutes for the iOS build. Autonomous crawling identified 94 distinct user flows across the app, significantly more than the 23 flows covered by their existing integration test suite.

Initial test runs revealed three classes of previously undetected issues. First, on budget Android devices (3GB RAM), the portfolio overview screen caused memory allocation failures after four minutes of use, a Flutter-specific issue caused by image widgets not calling dispose when removed from the widget tree. Second, the iOS transfer flow had a keyboard handling bug where tapping a numeric input field on iOS 17 with the predictive text bar active caused the input to receive a character offset error; Android was unaffected. Third, app startup on 3G made eleven sequential network requests before the dashboard became interactive, resulting in a 23-second startup time on slow networks.

The Solution

Three targeted changes addressed the discovered issues. The memory issue was resolved by implementing proper widget disposal in the portfolio image gallery, a two-line fix that reduced memory growth from +18MB per screen visit to under 1MB. The iOS keyboard bug was identified as a Flutter framework interaction with the iOS 17 predictive text API and resolved with a platform-specific input configuration. The startup network performance was improved by parallelising the eleven sequential requests (four were actually independent) and deferring three analytics calls until after the dashboard was interactive, reducing 3G startup time from 23 seconds to 7 seconds.

The Results

| Metric | Before QApilot | After QApilot |
|---|---|---|
| QA cycle time per release | 14 days | 3 days |
| Devices in validated matrix | 6 devices | 42 devices |
| User flows with automated coverage | 23 flows | 94 flows |
| Memory allocation failures on 3GB devices | Frequent (undetected) | 0 (resolved) |
| iOS-specific keyboard bug | Undetected | Caught and resolved pre-release |
| App startup on 3G | 23 seconds | 7 seconds (70% improvement) |
| Release cadence | 4 weeks | 2 weeks |

The fourteen-day QA cycle became a three-day cycle, not by reducing quality, but by replacing manual testing of six devices with automated testing across forty-two devices in parallel. The team doubled their release cadence within two months of adoption.

Best Practices for Flutter App Testing

Implement Accessibility Semantics Deliberately

Even if you are not relying on accessibility semantics as your primary test element identification strategy, implementing semantics throughout your Flutter widget tree improves test reliability across every tool you use. Use the Semantics widget to label interactive elements explicitly: buttons, form fields, navigation items, and key content elements. Treat accessibility implementation as test infrastructure, not an optional feature.

Test Each Platform Separately, Always

Never assume that passing on Android means passing on iOS, or vice versa. Platform-specific behaviour in navigation, permissions, keyboard handling, notifications, and background processing means that a Flutter app requires independent validation on each platform. Structure your CI/CD to always run both platforms on every release candidate, and treat platform-specific failures as first-class bugs, not minor variations.

Monitor Widget Lifecycle for Memory Leaks

Flutter's widget lifecycle (build, dispose) is the most common source of memory leaks in Flutter apps. When a stateful widget is removed from the tree, the dispose method of its State object must release resources: listeners, controllers, animation objects, and stream subscriptions. Failure to do so causes memory to grow continuously across screen navigations. Device health monitoring with QApilot's memory metrics makes these leaks visible during automated testing, catching them before they manifest as production crashes on low-RAM devices.

Test on Real Budget Android Devices

Flutter's rendering engine has meaningfully higher overhead than native UI frameworks on budget hardware. The Skia canvas pipeline consumes CPU and GPU resources that may be acceptable on a flagship device but problematic on a 2–3GB RAM Android device. The only way to know whether your Flutter app performs acceptably for budget device users is to test on budget devices. Do not assume that passing on the Pixel 8 or Samsung S24 means your app is safe for users on Redmi Note or Galaxy A series devices.

Treat Startup Performance as a First-Class Test Case

Flutter app startup time is a common performance pain point. The Flutter engine initialisation, Dart VM startup, and initial frame rendering add baseline overhead that native apps do not have. Combined with network requests that block the first meaningful paint, Flutter app startup on slow networks can be significantly longer than expected. Test startup performance on 3G with QApilot's network traces on every release candidate and set a Time to Interactive budget (for example, under 5 seconds on LTE and under 12 seconds on 3G) that blocks release if exceeded.

Run Flutter Widget Tests and End-to-End Tests as Complements, Not Alternatives

Flutter widget tests are fast and valuable for component-level validation. End-to-end tests on real devices are slow and valuable for system-level validation. Neither can replace the other. Widget tests catch component regressions in seconds; end-to-end tests catch integration failures that widget tests cannot see. Build and maintain both layers: the pyramid structure keeps total suite run time manageable while providing comprehensive coverage.

Validate Flutter Upgrades Before Adoption

Flutter version upgrades (of the Flutter framework itself, the Dart SDK, or major plugins) can introduce rendering behaviour changes, shifts in performance characteristics, or breaking API changes that affect test results. Treat Flutter upgrades as you would a major OS version change: run your full QApilot regression suite against the upgraded build before shipping it to production, and budget time for remediation if issues surface.

Tools and Integrations

QApilot's Flutter Testing Features

- Post-build binary testing: test compiled Flutter APK and IPA without source instrumentation or build-time hooks

- Cross-platform execution: same test intent runs on Android and iOS, with failures attributed per platform

- Knowledge Graph for Flutter: semantic element model that survives widget refactoring and UI changes

- AI self-healing: tests adapt automatically when Flutter widget structure changes, with no manual locator updates

- Device health monitoring: CPU, memory, thermal throttling, battery drain tracked during Flutter test execution (see the device health guide)

- Network traces: full request waterfall during Flutter test execution across simulated 3G, 4G, and LTE profiles (see the network traces guide)

- CI/CD integration: connects to Flutter build pipelines such as GitHub Actions, Bitrise, CircleCI, and Fastlane

- Real device cloud: integrates with BrowserStack, HeadSpin, and LambdaTest for access to physical Flutter-capable devices

Complementary Tools

flutter test: Flutter's built-in widget and unit test runner. Fast, source-dependent, ideal for the base of the testing pyramid. Not suitable for end-to-end testing on real devices.

flutter_driver / integration_test package: Flutter's official integration testing library. Supports multi-screen flows on real devices but requires Dart source access and is tightly coupled to the development environment.

Maestro: A mobile UI testing framework with Flutter support via accessibility semantics. Requires semantics to be implemented in the Flutter codebase. Simpler scripting than Appium but subject to the same semantics coverage limitations.

Firebase Test Lab: Google's cloud testing service for Android apps including Flutter. Provides access to real devices and automated pre-launch reports. Integrates with QApilot for expanded test coverage beyond the pre-launch report.

Patrol: A Flutter-native integration testing library built on top of the integration_test package that adds native interaction capabilities (handling system dialogs, notifications). Useful at the integration test layer; does not cover end-to-end binary testing.

Quick Reference: Pre-Ship Flutter QA Checklist

Before shipping your Flutter app, verify:

- Flutter widget tests passing across all critical components

- Integration tests passing on both Android emulator and iOS simulator

- QApilot end-to-end tests passing on Android - minimum one budget, one mainstream, one flagship device

- QApilot end-to-end tests passing on iOS - minimum two iOS versions (latest and previous major)

- Platform-specific flows validated separately: navigation, permissions, keyboard, notifications, deep links

- Memory leak check: no memory growth pattern across repeated screen navigations on 3GB RAM devices

- Thermal throttling not occurring during normal use on budget devices

- App startup time within budget on 3G network conditions (recommend under 12 seconds)

- No sequential request chains blocking UI interactivity at startup

- Flutter version and all plugin versions locked to validated versions for this release

- Device health metrics within performance budgets for each device category

- Network traces reviewed for new requests added in this release cycle

- Self-healing events reviewed and approved for any widget changes in this build

- CI/CD pipeline ran successfully producing green results on release candidate
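
The startup budget item in this checklist can be enforced mechanically. The sketch below uses the example budget given earlier in this guide (under 5 seconds on LTE, under 12 seconds on 3G). The profile names, the `startup_violations` helper, and the measured values are hypothetical, and the measurements are assumed to come from your own startup timing data.

```python
# Example Time to Interactive budgets in seconds, per network profile.
STARTUP_BUDGET_S = {"lte": 5.0, "3g": 12.0}


def startup_violations(measured_s, budget_s=STARTUP_BUDGET_S):
    """Return the network profiles whose startup time is not under budget.

    A profile with no measurement is reported as a violation too, so a
    missing test run cannot silently pass the gate.
    """
    violations = []
    for profile, budget in budget_s.items():
        measured = measured_s.get(profile)
        if measured is None or measured >= budget:
            violations.append(profile)
    return violations


# Hypothetical measurements for two release candidates.
before_fix = startup_violations({"lte": 3.2, "3g": 23.0})
after_fix = startup_violations({"lte": 3.2, "3g": 7.0})
print(before_fix, after_fix)
```

A release script could fail the build whenever the returned list is non-empty.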

Summary

Flutter's cross-platform promise is real for development. For testing, it introduces a specific set of challenges that standard native testing tools and workflows are not equipped to handle. The custom canvas rendering engine breaks accessibility-tree-based automation. The single codebase still requires per-platform test validation. The Flutter rendering overhead requires testing on real budget devices. And the cross-platform nature of the app makes it tempting to skip the platform-specific testing that production quality demands.

A complete Flutter QA strategy layers widget tests, integration tests, and post-build end-to-end testing on real devices, and it treats the Android and iOS platforms as separate validation targets that share test intent but require independent execution. QApilot makes that end-to-end layer practical: post-build testing from compiled binaries, automatic Knowledge Graph construction, AI self-healing for widget changes, device health monitoring, and network trace analysis, all without source instrumentation and all integrated into your existing CI/CD pipeline.

Flutter lets your developers write once. QApilot lets your QA team validate everywhere and ship with confidence on both platforms.

Frequently Asked Questions

Q1: Why can't standard tools like Appium test Flutter apps?

Standard mobile automation tools work by traversing the platform's native accessibility tree: the View hierarchy on Android and the XCTest accessibility hierarchy on iOS. Flutter does not use native widgets; it renders every pixel directly onto a canvas using the Skia or Impeller engine. From the platform's perspective, the entire Flutter UI is a single opaque drawing surface. Standard tools see one element, the Flutter view, rather than the individual widgets inside it. This is why element identification that works perfectly on native apps fails on Flutter without additional instrumentation.

Q2: Does QApilot require source code access to test Flutter apps?

No. QApilot tests Flutter apps post-build from the compiled binary, the same APK or IPA that your users receive. No Dart source code, no flutter_driver instrumentation, no build-time hooks, and no modifications to your Flutter development workflow are required. This is architecturally significant: you are testing the production-equivalent binary, not an instrumented build that may behave differently from what ships. Full details are at the QApilot for Flutter page.

Q3: If Flutter has one codebase, do I need to test on both Android and iOS?

Yes, and this is one of the most important points for Flutter QA teams. Shared business logic does not mean identical platform behaviour. Navigation models, permission systems, keyboard handling, notification APIs, deep linking implementations, background processing, and file system access all differ between Android and iOS, and these differences manifest as real user experience differences and real bugs that affect only one platform. Testing only on Android and assuming iOS is equivalent is one of the most common and costly mistakes in Flutter QA.

Q4: How do I handle widget tree changes without breaking tests?

QApilot's AI self-healing engine handles widget-level changes the same way it handles native app element changes: it uses a multi-signal matching approach that identifies elements by semantic role, text content, visual appearance, and interaction context, rather than by widget tree path or implementation-specific identifiers. When your team refactors widgets, reorganises screens, or updates the component library, QApilot heals affected tests automatically at high confidence and flags ambiguous changes for human review. For a detailed explanation of how self-healing works, see the AI self-healing guide.
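
To illustrate the general idea of multi-signal matching with a confidence threshold, here is a deliberately simple Python sketch. It is not QApilot's algorithm. The signal weights, the thresholds, and the `heal` function are all assumptions made up for this example.

```python
# Illustrative weights (they sum to 100); a real system would tune these.
SIGNAL_WEIGHTS = {"role": 35, "text": 35, "appearance": 15, "context": 15}


def match_score(original, candidate):
    """Sum the weights of the signals on which the two elements agree."""
    return sum(weight for signal, weight in SIGNAL_WEIGHTS.items()
               if signal in original
               and original[signal] == candidate.get(signal))


def heal(original, candidates, auto_threshold=80, min_margin=20):
    """Pick a replacement element, or ask for review when ambiguous.

    Auto-heal only if the best candidate scores high enough and clearly
    beats the runner-up; otherwise return "needs_review".
    """
    if not candidates:
        return ("needs_review", None)
    ranked = sorted(candidates,
                    key=lambda c: match_score(original, c), reverse=True)
    best = match_score(original, ranked[0])
    runner_up = match_score(original, ranked[1]) if len(ranked) > 1 else 0
    if best >= auto_threshold and best - runner_up >= min_margin:
        return ("healed", ranked[0])
    return ("needs_review", None)


# Hypothetical element before a refactor, and candidates after it.
send_button = {"role": "button", "text": "Send money",
               "appearance": "primary", "context": "opens_transfer"}
restyled = {"role": "button", "text": "Send money",
            "appearance": "secondary", "context": "opens_transfer"}
cancel = {"role": "button", "text": "Cancel",
          "appearance": "secondary", "context": "closes_sheet"}

clear_case = heal(send_button, [restyled, cancel])
ambiguous_case = heal(send_button, [restyled, dict(restyled)])
print(clear_case[0], ambiguous_case[0])
```

The margin check is what separates automatic healing from human review: two equally plausible candidates produce a review flag instead of a guess.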

Q5: What are the most common Flutter-specific performance problems found in testing?

Four Flutter-specific performance issues appear most frequently in device health and network trace analysis. First, widget disposal memory leaks: widgets removed from the tree without calling dispose, causing memory to grow continuously across screen navigations. Second, animation CPU load on budget devices: Flutter's declarative animation model can be computationally expensive when many animations run simultaneously on devices with limited GPU resources. Third, first-frame blocking by network requests: the initial Flutter frame is sometimes blocked by sequential network calls that could be parallelised or deferred. Fourth, excessive widget rebuilds: setState called too broadly triggers re-rendering of large widget subtrees, causing unnecessary CPU load during user interactions.

Q6: What Flutter versions and build configurations does QApilot support?

QApilot supports standard Flutter release builds for both Android (APK and AAB) and iOS (IPA) across Flutter stable channel versions. Profile and debug builds are not required, because QApilot tests the same release binary your users receive. For specific version compatibility and configuration requirements, see the QApilot Flutter Testing documentation.

Q7: How does QApilot's Flutter testing integrate with CI/CD pipelines?

QApilot integrates with Flutter CI/CD pipelines through standard build artifact upload. Your existing pipeline builds the Flutter APK and IPA using flutter build commands, then uploads the artifacts to QApilot via API or CLI. QApilot performs Knowledge Graph comparison, self-healing, and test execution, returning results to the pipeline as structured pass/fail signals. Integration is supported for GitHub Actions, Bitrise, CircleCI, Fastlane, and other common Flutter CI/CD environments. Results include per-platform breakdowns, device health reports, and network trace summaries, all linked from the pipeline output.

References

QApilot for Flutter

Flutter Testing Documentation - Flutter.dev

Integration Testing with Flutter - Flutter.dev

Flutter Performance Best Practices - Flutter.dev

Maestro Flutter Testing